Store listing experiments can help you optimize your Google Play Store listing. Whatever you’re A/B testing, running Play Store experiments successfully follows the same process. There are two main goals to adding a promo video to your Play Store listing.

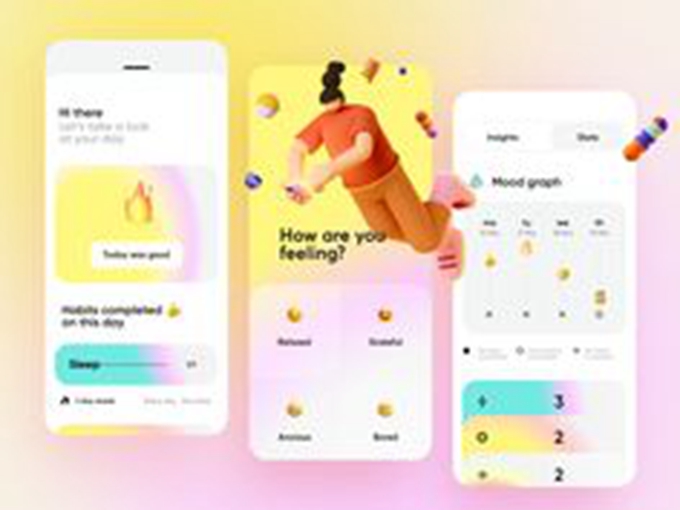

The first one is to improve conversion on the product page: get more installs for the same number of store listing visitors. A video done the right way, one that shows what the app/game is all about and how it can bring value to the user, should help persuade the potential user to download the app.

If your retention rates are below these, there is also room for improvement (source: Lab Cave Games)

The second is to improve engagement and retention after the install. A new user who watched the video before downloading the app has a better idea of the value the app/game can bring them and is more likely to find their way around or keep playing.

- What kind of impact can you expect on conversion rate?

- Introducing Google Play Store listing experiments

- Before going further

- Step 1: Upload your promo video to YouTube

- Step 2: Create the Google Play Store listing experiment

- Step 3: Don’t look!

- Step 4: Analyze the results

- Step 5 (optional): Pre-post analysis of conversion rate

- Step 6 (optional): Perform a B/A test (counter-testing)

- Step 7 (optional): Tweak/optimize your promo video

- Measuring the impact on retention

- Conclusion

Are you having a video produced right now, or did we just deliver yours?

You might be wondering how to assess the impact of your promo video on the Play Store. How will you know if the video helps?

Here is a step-by-step guide to how we suggest measuring the impact.

Note: A LOT of the advice in this post is general and can be applied to experiments with other listing attributes (icon, screenshots, feature graphic, etc.) and even to other A/B tests. But this post focuses specifically on store listing experiments with the video attribute.

WHAT KIND OF IMPACT CAN YOU EXPECT ON CONVERSION RATE?

We’ve had several clients who saw their conversion rate increase when they added a new promotional video for their Android app.

Sometimes they already had a video on the Play Store, and sometimes they weren’t using video yet.

The same results can’t be guaranteed. But measuring the impact is also the first step to optimizing your video in case it didn’t perform as well as expected (Step 7).

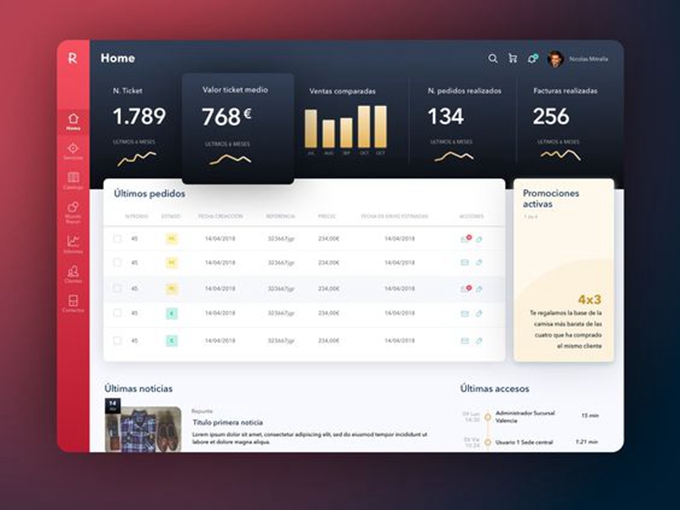

INTRODUCING GOOGLE PLAY STORE LISTING EXPERIMENTS

Google offers a tool in the Google Play Developer Console called Store listing experiments.

With store listing experiments you can A/B test changes to your store listing: one subgroup of Play Store visitors will see version A, and another subgroup will see version B.

You then compare which version got more installs.

The key features of store listing experiments (source: Google)

BEFORE GOING FURTHER

Which localization(s) should you start with?

It’s great to be able to localize video on the Play Store.

If you have a large user base that speaks another language, you can tailor the video to them.

With store listing experiments, you can run tests for any localization in up to 5 languages simultaneously.

That said, unless you have a large volume of downloads, you should start A/B testing with only one language (example: EN-US).

Defining your objective

If you don’t already have a video on your Play Store listing, what you want to A/B test first is:

- Version A (Current version): no promo video

- Version B (Variant with video): exact same listing but with a promo video

If you already have a video on your listing, what you want to A/B test first is:

- Version A (Current version): current promo video

- Version B (Variant with new video): new promo video

After running the experiment, your objective is to determine whether the video helped convert more visits into installs on the Google Play Store, and by how much.

So before getting started you want to define:

- The variable: the one thing that gets changed (video vs. no video, new video vs. current video)

- The result: the expected outcome (by how much you believe it should change the conversion rate)

- The rationale: why you think it should change the conversion rate

Try to keep marketing/acquisition efforts relatively consistent

The listing experiment only measures what happens in the Play Store. But a lot of external factors can affect the results.

The main one we’ve identified (as long as your Play Store listing stays the same) is a big marketing or advertising push. If your advertising/marketing campaigns change significantly, you run the risk of seeing different behavior from visitors to your Play Store listing: they may have already decided whether to download the app by the time they get there.

Google doesn’t differentiate by type of install (Organic Search, Organic Browse, third-party referrers like ad networks, etc.), so a campaign can change the “normal source mix” of visitors, which may affect the changes/experiments you make (including video). This is especially true if they’ve already seen a video ad and are very clear on the added value of the app!

We understand that keeping marketing/advertising the same might not always be possible, but at least keep this in mind.
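A weighted average shows why a change in the source mix matters. The conversion rate of a listing is the conversion rate of each visitor source weighted by that source’s share of visitors. It therefore moves when the mix moves, even if no individual source converts any differently. The minimal Python sketch below illustrates that arithmetic. The function name, source names, shares and rates are all hypothetical and do not come from the Play Console.

```python
def blended_conversion_rate(mix: dict[str, tuple[float, float]]) -> float:
    """Overall conversion rate from {source: (share_of_visitors, conversion_rate)}.

    Shares are normalized, so they don't have to sum exactly to 1.
    """
    total_share = sum(share for share, _ in mix.values())
    return sum(share * rate for share, rate in mix.values()) / total_share


# Hypothetical figures: each source converts at the same rate in both weeks;
# only the share of ad-referred visitors changes during a campaign push.
normal_week = {"organic": (0.8, 0.20), "ad_referred": (0.2, 0.40)}
campaign_week = {"organic": (0.5, 0.20), "ad_referred": (0.5, 0.40)}

print(f"{blended_conversion_rate(normal_week):.2f}")
print(f"{blended_conversion_rate(campaign_week):.2f}")
```

In this made-up case, the overall rate goes from 24% to 30% without the listing changing at all.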

What’s your current conversion rate?

You can find your app’s conversion rates in the Acquisition reports of your Google Play Developer Console.

Here is what they look like:

Overall and organic-only conversion rates for all countries can be found in your report when you “Measure by Acquisition channel”

Take note of each conversion rate for the previous week. You can also note the overall and organic conversion rates for the country where you plan to run the test (in our case the United States, since we’re choosing EN-US as the language).

You will run an A/B test, but you still want to know what these conversion rates are before, during and after the test (see Step 5 as well).

What volume of downloads do you need (and what sample size)?

Your experiment needs a sample size big enough for Google to determine statistical significance (a “winner”).

Most people say you need thousands of installs for each version to get reliable results.

To help you determine your sample size, check out this handy Sample Size Calculator. Here is an example of parameters you could use:

Important: the parameters marked “might need to adjust” may need to be different in your case (see explanation below).

Some of the parameters that define your sample size are fixed (significance level: 10%) or fairly simple (your conversion rate).

Others, however, depend on how careful you need to be about making changes:

- Minimum Detectable Effect (MDE)

- Statistical power

If you just launched, or if you’re a startup with few downloads and you’re willing to take more risks, you can use less stringent parameters for MDE and statistical power. The values in the picture above, however, are fairly “normal” (MDE: 5%, statistical power: 80%).

If you have a successful app that has been in the store for a long time and has great ratings, run a more sensitive test with more stringent parameters. You can, for example, decrease the MDE and increase the statistical power. You will need a larger sample, but your results will be more precise.

In the example above, you need at least 19,907 visitors for each variant, so 39,814 in total. With a current conversion rate of 20% as above, that means 7,962.8 installs in total (or about 3,981 per variant, with only 2 variants as discussed here).
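You can check the numbers above with the following minimal Python sketch of a standard sample-size formula for comparing two conversion rates (normal approximation, two-sided test). Whether the linked calculator uses exactly this formula is an assumption, and the function name is hypothetical. `relative_mde` is the MDE expressed relative to the baseline rate, as in the example: 5% of 20% is 1 percentage point.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_cr: float, relative_mde: float,
                         alpha: float = 0.10, power: float = 0.80) -> float:
    """Visitors needed in EACH version to detect the given relative lift.

    Two-sided test on two proportions, normal approximation:
    n = (z_(1-alpha/2) * sd1 + z_power * sd2)^2 / delta^2
    """
    delta = baseline_cr * relative_mde              # absolute lift, e.g. 0.20 * 0.05 = 0.01
    variant_cr = baseline_cr + delta
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # about 1.645 for alpha = 10%
    z_power = NormalDist().inv_cdf(power)           # about 0.842 for 80% power
    sd1 = math.sqrt(2 * baseline_cr * (1 - baseline_cr))
    sd2 = math.sqrt(baseline_cr * (1 - baseline_cr) + variant_cr * (1 - variant_cr))
    return (z_alpha * sd1 + z_power * sd2) ** 2 / delta ** 2


n = round(visitors_per_variant(baseline_cr=0.20, relative_mde=0.05))
total_visitors = 2 * n                  # current version + 1 variant
total_installs = total_visitors * 0.20  # at the current 20% conversion rate
print(n, total_visitors, f"{total_installs:.1f}", f"{total_installs / 2:.1f}")
```

The example’s inputs are a 20% conversion rate, a 5% relative MDE, 10% significance and 80% power. With those, this formula gives about 19,907 visitors per variant, consistent with the figure above. Halving the MDE roughly quadruples the required sample, which is why more stringent parameters need a lot more traffic.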

If you run an experiment without waiting for this volume of downloads, the test results are unlikely to be accurate. So now let’s see how much time you need to run your experiment.

How long should you run your store listing experiment?

Of course, the answer is tied to the volume of installs you defined above.

As we saw, we need about 4,000 installs per variant (rounding up the 3,981 above). Let’s say you have 5,000 installs per week. You would have:

- 1-week test: 2,500 installs for each version. This isn’t enough.

- 2-week test: 5,000 installs for each version. This should give more reliable results.

So if you get 30k downloads per week, should you just run an experiment for 2 days?

Google says you should be patient

The answer is no. Play Store visitors’ behavior can vary over the course of the week or on the weekend, so we suggest making your experiment last at least a full week.
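The duration arithmetic above can be written as a small helper. This sketch makes two assumptions. First, installs are split evenly between the versions, as with a 50% audience and one variant. Second, the result is rounded up to whole weeks with a one-week minimum, so that weekday and weekend behavior are both covered. The function name is hypothetical.

```python
import math


def weeks_to_run(installs_per_variant: int, weekly_installs: int,
                 n_versions: int = 2, min_weeks: int = 1) -> int:
    """Whole weeks needed to collect the target installs for every version.

    Assumes installs are split evenly across versions and rounds up to
    full weeks, never going below the minimum duration.
    """
    weeks = math.ceil(installs_per_variant * n_versions / weekly_installs)
    return max(weeks, min_weeks)


print(weeks_to_run(4_000, 5_000))   # 2
print(weeks_to_run(4_000, 30_000))  # 1
```

With 30k installs per week, the install target alone would be reached in under 2 days, but the one-week minimum still applies.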

Once you’ve set your sample size, and therefore how long your experiment should last, stick to it (see Step 3).

Why you should NOT test more than 2 versions at the same time

Play Store listing experiments let you do more than just A/B tests: you can split test up to 4 versions (current version + 3 variants) at the same time.

We suggest (and we’re not the only ones) keeping your testing to only 2 versions (current version + 1 variant). It’s not only about getting (more stable) results faster. It will also make them easier to analyze.

As Luca Giacomel from Bending Spoons explains, “the real reason for not doing multiple A/B tests in parallel is that all of them will yield a lower statistical confidence due to a very well-known statistical problem called the problem of ‘multiple comparisons’ or ‘look elsewhere effect’.”
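Here is a rough number for the “multiple comparisons” problem. Suppose each variant is compared to the current version at a 10% significance level. The chance that at least one of them shows a false “winner” then grows with the number of variants. The sketch below treats the comparisons as independent. That is a simplifying assumption, since they all share the same current version. The sketch also shows the Bonferroni correction, one common conservative fix that the original post does not mention.

```python
def family_wise_error_rate(alpha: float, n_comparisons: int) -> float:
    """Chance of at least one false "winner" across independent comparisons."""
    return 1 - (1 - alpha) ** n_comparisons


def bonferroni_alpha(alpha: float, n_comparisons: int) -> float:
    """Per-comparison significance level keeping the family-wise rate at or below alpha."""
    return alpha / n_comparisons


for n_variants in (1, 3):  # current version + 1 variant vs. + 3 variants
    print(n_variants,
          f"{family_wise_error_rate(0.10, n_variants):.1%}",
          f"{bonferroni_alpha(0.10, n_variants):.2%}")
```

With three variants, the chance of at least one false winner rises from 10% to about 27%. Keeping it at 10% would mean testing each variant at about 3.3%, which in turn needs a larger sample.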

So… trust us. Don’t overcomplicate things.

STEP 1: UPLOAD YOUR PROMO VIDEO TO YOUTUBE

A Google Play Store video is a YouTube video.

So to add a promo video to your Play Store listing, you first need to upload it to YouTube.

As you know, YouTube has 3 visibility options: public, unlisted and private. You can’t use private for obvious reasons, so let’s take a look at the other two.

The advantage of an unlisted video is that you know most of the views (usually > 90%) come from people who saw the promo video on the Google Play Store. This makes your YouTube analytics much more meaningful and gives you insights into how Google Play Store visitors engage with your video(s).

When unlisted, most of the views on the video come from the Google Play Store (“external”)

If the video is public, you can see the traffic sources. But you won’t be able to analyze the important video metrics by source (more on that in the last part of this post).

The advantage of a public YouTube video is that if your app listing gets a lot of traffic, your video can quickly gather thousands of views. Those views then help it rank better in YouTube search (the second-largest search engine after Google), and even on Google.

In general, we suggest starting with your video(s) unlisted, at least until you’re confident the video will stay there for a while (i.e. you aren’t optimizing/testing the video part for a while).

STEP 2: CREATE THE EXPERIMENT

Open your Google Play Developer Console and go to the “Store listing experiments” section under “Store presence”.

Click “New Experiment”, then select the desired language in the “Localized” section.

You select “Localized” because you want to test only on users who speak the language of your video, so that the behavior of other users doesn’t affect the experiment.

Create the experiment by giving it a name and choosing how much of your audience will see the experiment. Choose the maximum allowed: 50%.

You then select what you’d like to change compared to your current listing. In this case, select only “Promo video”.

Name your variant (example: Video) and paste the URL of your video on YouTube.

That’s it!

You can now run the experiment (but keep reading).

STEP 3: DON’T LOOK!

This is the hardest part.

Your experiment is running, and it’s very tempting to come back every day to get a sense of which variant is performing better.

Looking at the data won’t change the results per se, but how much do you really trust yourself if the experiment isn’t performing as well as you hoped (or worse)? Will you be able to keep it going?

It’s best to stick to your planned test period and stay away from that damn experiment. Even if Google tells you the experiment is complete.

Why?

In super short: because of false positives. You might think (and Google might tell you) that the experiment is successful, but since you haven’t reached the sample size you defined, that might not actually be the case.

The full story: if you really want to understand why, check out this post and read up on significance levels and false positives.
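The following small simulation, which is not part of the original post, shows why peeking inflates false positives. It runs A/A tests, in which both versions have exactly the same true conversion rate. It then compares two habits: stopping as soon as a daily check looks “significant”, and looking only once at the planned end. A pooled two-proportion z-test at 10% significance stands in for Google’s own analysis, which may differ. The traffic numbers and function names are hypothetical.

```python
import math
import random
from statistics import NormalDist


def p_value(installs_a: int, installs_b: int, visitors: int) -> float:
    """Two-sided p-value of a pooled two-proportion z-test (equal traffic)."""
    pooled = (installs_a + installs_b) / (2 * visitors)
    se = math.sqrt(pooled * (1 - pooled) * 2 / visitors)
    if se == 0:
        return 1.0
    z = (installs_b - installs_a) / visitors / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(n_tests: int = 2_000, days: int = 14,
                      daily_visitors: int = 500, true_cr: float = 0.20,
                      alpha: float = 0.10, seed: int = 42) -> tuple[float, float]:
    """Share of A/A tests declared a "winner" with daily peeking vs. one final look.

    Daily installs per version come from a normal approximation to the
    binomial distribution, which keeps the sketch fast and stdlib-only.
    """
    rng = random.Random(seed)
    mean = daily_visitors * true_cr
    sd = math.sqrt(daily_visitors * true_cr * (1 - true_cr))
    peeking_hits = final_hits = 0
    for _ in range(n_tests):
        installs_a = installs_b = visitors = 0
        significant_on_some_day = False
        for _ in range(days):
            visitors += daily_visitors  # per version
            installs_a += max(0, round(rng.gauss(mean, sd)))
            installs_b += max(0, round(rng.gauss(mean, sd)))
            p = p_value(installs_a, installs_b, visitors)
            significant_on_some_day = significant_on_some_day or p < alpha
        peeking_hits += significant_on_some_day
        final_hits += p < alpha  # only the last day's p-value counts
    return peeking_hits / n_tests, final_hits / n_tests


peeking_rate, final_rate = simulate_aa_tests()
print(f"peeking every day: {peeking_rate:.0%} false positives")
print(f"one look at the end: {final_rate:.0%} false positives")
```

These tests contain no real difference, so every “winner” is a false positive. The single final look should stay close to the 10% significance level. Checking every day and stopping at the first significant result flags a “winner” noticeably more often.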

STEP 4: ANALYZE THE RESULTS

OK, so the test period is over and the sample size has been reached. Now is the time to see whether the variant performed better.

(source: TimeTune)

Current Installs is the number of actual installs for each version. Scaled Installs is what you would have gotten if only that version had been running. Here it’s twice as much because we’re working with a 50-50 split.
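Scaled installs can be reproduced with a single division. This assumes that Google simply divides each version’s installs by the share of the audience that saw it, which is consistent with the “twice as much” at a 50-50 split. Here is a minimal sketch with a hypothetical install count and function name:

```python
def scaled_installs(current_installs: int, audience_share: float) -> float:
    """Installs a version would have had if it received 100% of the traffic."""
    if not 0 < audience_share <= 1:
        raise ValueError("audience_share must be in (0, 1]")
    return current_installs / audience_share


# With a 50% audience, each version sees half of the visitors,
# so its installs are doubled.
print(scaled_installs(2_500, 0.50))  # 5000.0
```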

If Google shows a winner

Performance for the variant is not fully positive

If you were to re-run the experiment 10,000 times, then 90% of the time you would get a result in this -3.3% to +6.1% range

→ DO NOT APPLY the change

Performance for the variant is fully positive (>0)

If you were to re-run the experiment 10,000 times, then 90% of the time you would get a result in this +4.5% to +15.3% range

It also means that the other 10% of the time you could get a result outside this range.

→ DO APPLY the change

Note: Remember the Minimum Detectable Effect (MDE) parameter you chose at the beginning?

The MDE affects how big the “gray area” is. If your MDE is big, be careful about applying a change whose result range is too close to 0 (even if positive).

You can be less confident in the results if you get this kind of range, especially if your MDE is 5% or higher.

If Google says it needs more data

Again, be very careful: Google will often say there’s enough data after 2-3 days, then revert to “needs more data”. That’s why defining the sample size before the test is important (as well as following Step 3!).

If Google says the experiment isn’t over because it needs more data after the sample size has been reached, consider the experiment inconclusive so far.

→ DO NOT APPLY the change

Note: If the sample size has not been reached yet and Google says it needs more data, it most likely means you haven’t read Step 3. So wait for the sample size to be reached and keep the experiment running.
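The decision rules in this step can be summarized as a small function. This is a sketch, not a Play Console feature. The interval bounds are the ones Google displays for the variant’s performance, written as fractions (0.045 for +4.5%). `caution_margin` is a hypothetical parameter for the “too close to 0” warning, which you would set with your MDE in mind.

```python
def decide(sample_size_reached: bool, needs_more_data: bool,
           ci_low: float, ci_high: float, caution_margin: float = 0.0) -> str:
    """Turn a finished store listing experiment into a decision (Step 4 rules).

    ci_low / ci_high: bounds of the variant's performance range, as fractions.
    caution_margin: hypothetical threshold; a fully positive range whose lower
    bound is below it gets flagged instead of applied outright.
    """
    if ci_low > ci_high:
        raise ValueError("ci_low must not exceed ci_high")
    if not sample_size_reached:
        return "keep running"                  # Step 3: don't stop early
    if needs_more_data:
        return "do not apply (inconclusive)"
    if ci_low <= 0:
        return "do not apply"                  # range not fully positive
    if ci_low < caution_margin:
        return "apply with caution"            # positive but close to 0
    return "apply"


print(decide(True, False, -0.033, 0.061))  # do not apply
print(decide(True, False, 0.045, 0.153))   # apply
```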

STEP 5 (OPTIONAL): PRE-POST ANALYSIS OF YOUR CONVERSION RATE

We talked at the beginning about the conversion rate benchmark that Google gives you. It shows where you stand and how much you could improve compared to other apps in the same category.

To further validate the results of your A/B test and see what actually happens once the change is implemented, compare the conversion rate during the week before the test with the conversion rate during the week after the test period.

This means you have to hold off on running another experiment during that time.

The conversion rate in the conversion benchmark covers only Play Store (Organic) visitors and includes all localizations/countries.

If you ran a localized experiment as we suggested, look at the conversion rate for the corresponding country/language instead.

Needless to say, what you want to see is (for all countries and for the targeted country/language):

Overall conversion rate after experiment > Overall conversion rate before experiment

Organic conversion rate after experiment > Organic conversion rate before experiment
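The pre-post comparison above boils down to two steps for the overall and organic figures alike. First, compute each week’s conversion rate (installers divided by store listing visitors). Then check the direction of the change. Here is a minimal sketch. The function names, segment names and counts are hypothetical; you would fill in the counts from your Acquisition reports for the week before and the week after the test.

```python
def conversion_rate(installers: int, visitors: int) -> float:
    """Share of store listing visitors who installed the app."""
    return installers / visitors


def pre_post_change(before: dict[str, tuple[int, int]],
                    after: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Relative change in conversion rate per segment (> 0 means improvement).

    Each dict maps a segment such as "overall" or "organic" to
    (installers, visitors) for one week.
    """
    return {
        segment: conversion_rate(*after[segment]) / conversion_rate(*before[segment]) - 1
        for segment in before
    }


# Hypothetical weekly figures for the targeted country
week_before = {"overall": (2_000, 10_000), "organic": (1_500, 8_000)}
week_after = {"overall": (2_200, 10_000), "organic": (1_560, 8_000)}
changes = pre_post_change(week_before, week_after)
print({segment: f"{change:+.1%}" for segment, change in changes.items()})
```

Both values being positive is the outcome you hope for.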

If you’re not seeing this, check the parameters/sample size you used and the results of the A/B test. Either run the same test again, or consider performing a B/A test.

STEP 6 (OPTIONAL): PERFORM A B/A TEST (COUNTER-TESTING)

As we’ve seen above, you should only apply the change if the uplift is significant.

Thomas Petit (Mobile growth at 8fit) warns that the B/A test method described below isn’t valid from a purely statistical perspective, but it can help rule out the biggest errors. So he still recommends it. Check out his presentation and slides on A/B testing your store listing.

For a B/A test you invert the versions:

- Version A (Current version): promo video on the listing

- Version B (Variant without video): exact same listing but with no video

Run the experiment exactly the same way. If you get the same kind of performance, this time in favor of the current version with video, your confidence in the results will increase.

STEP 7 (OPTIONAL): TWEAK/OPTIMIZE YOUR PROMO VIDEO

There might be room for improvement in your video. Of course, this is especially true if the results of the test weren’t positive.

Analyze YouTube Analytics

You can’t know (yet?) which of the visitors who installed the app watched the video. Still, the main metrics in YouTube Analytics can give you a sense of how engaging your video is.

Here is what you want to look at:

- Number of views

- Average view duration

- Average percentage viewed

An interesting chart to look at is the “Audience Retention” chart: it lets you identify how quickly you’re “losing” viewers.

If you see any sudden drops, think about how you could tweak the video to counter them.
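You can also spot sudden drops in the numbers behind the Audience Retention chart. This sketch assumes you already have the curve as (second, percent of viewers still watching) pairs, however you obtain them. The 10-point threshold is a hypothetical starting value, not a YouTube recommendation, and the curve below is made up.

```python
def sudden_drops(retention: list[tuple[float, float]],
                 threshold: float = 10.0) -> list[tuple[float, float, float]]:
    """Find windows where audience retention falls sharply.

    retention: (second, percent_still_watching) pairs, sorted by time.
    threshold: minimum drop, in percentage points, between two consecutive
    points for the window to be flagged.
    Returns (start_second, end_second, drop_in_points) for each flagged window.
    """
    drops = []
    for (t0, r0), (t1, r1) in zip(retention, retention[1:]):
        if r0 - r1 >= threshold:
            drops.append((t0, t1, r0 - r1))
    return drops


# Hypothetical curve for a 15-second promo video
curve = [(0, 100), (2, 70), (5, 65), (10, 50), (15, 48)]
print(sudden_drops(curve))  # [(0, 2, 30), (5, 10, 15)]
```

You can then match each flagged window against a summary of your video structure to find the scene that loses viewers.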

Note: you can do the same thing with the YouTube videos you’re using in your UAC campaigns.

Things you can try

What kind of tweaks can you try to optimize the video?

First, write a summary of your video structure. Something like:

- 2s animated phone with logo, icon and tagline

- 5s about value proposition 1

- 5s about value proposition 2

- 3/4s CTA

In the example above, one of the first things you could try is removing the logo at the beginning entirely: your branding is already on the Play Store listing (icon, app name, etc.).

You can also try leading with a different value proposition. And of course, you can decide to go with a completely different concept.

A few tips and best practices:

- Optimize for small screens: your video will be played on a mobile device, so it should look good and be understandable even when small. Think zoomed-in device, etc.

- Put the most important benefit/value proposition first: there is absolutely no reason to lead with anything else. Your video doesn’t have to start the same way the user journey starts.

- Make it understandable without sound: a lot of visitors won’t have the sound turned on, so use copy/text. It’s a YouTube video, but viewers are on the Play Store, so the usual YouTube user behavior (sound on) doesn’t apply.

- If you use video for multiple languages, make sure it’s localized

MEASURING THE IMPACT ON RETENTION

For the second goal mentioned at the beginning of this article (increasing retention), measuring is quite tricky. The only option so far seems to be a pre-post analysis (before vs. after).

Since Google added retention information (Day 1, Day 7, Day 15, Day 30; cf. charts above), you can track this metric precisely.

However, you can’t A/B test on cohorts over time, so you can’t keep track of two separate groups of users: those who saw the video on the Play Store listing and those who saw no video. This makes it a real challenge to measure.
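If you do run a pre-post retention analysis, a two-proportion z-test is one standard check (not mentioned in the original post). It tells you whether the change in Day-N retention between the cohort before and the cohort after the video is larger than random noise. For the reason given above, it cannot attribute the change to the video. The function name and the cohort sizes in the usage lines are hypothetical.

```python
import math
from statistics import NormalDist


def retention_change(retained_before: int, cohort_before: int,
                     retained_after: int, cohort_after: int) -> tuple[float, float]:
    """Change in Day-N retention (after minus before) and its two-sided p-value.

    Pooled two-proportion z-test. A small p-value says the cohorts differ;
    in a pre-post design it cannot say why.
    """
    rate_before = retained_before / cohort_before
    rate_after = retained_after / cohort_after
    pooled = (retained_before + retained_after) / (cohort_before + cohort_after)
    se = math.sqrt(pooled * (1 - pooled) * (1 / cohort_before + 1 / cohort_after))
    if se == 0:
        raise ValueError("retention of 0% or 100% in both cohorts; test undefined")
    z = (rate_after - rate_before) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_after - rate_before, p_value


# Hypothetical Day 7 cohorts: 30% retained before, 36% after
change, p = retention_change(300, 1_000, 360, 1_000)
print(f"{change:+.1%}, p = {p:.3f}")
```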